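Deploying Kubernetes 1.29 with kubeadm

📅 2023-12-27

kubeadm is the official Kubernetes tool for quickly installing and deploying a Kubernetes cluster. With each Kubernetes release, kubeadm may adjust some of its cluster-configuration practices, so experimenting with kubeadm is a good way to learn the latest best practices the Kubernetes project recommends for cluster configuration.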

1. Preparation

1.1 System configuration

Complete the following preparation before installing.

The three Linux hosts are:

- node4 - Ubuntu 22.04

- node5 - openEuler release 22.03 (LTS-SP2)

- node6 - Rocky Linux release 8.8 (Green Obsidian)

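    cat /etc/hosts
    192.168.96.154 node4
    192.168.96.155 node5
    192.168.96.156 node6

Complete the following system configuration on every host.

If SELinux is enabled, disable it with the following commands:

    # Switch to permissive mode for the running system
    setenforce 0

    # Disable SELinux permanently: edit the file and set the line below
    vi /etc/selinux/config
    SELINUX=disabled

If a firewall is enabled on the hosts, open the ports required by the Kubernetes components (see "Ports and Protocols" in the Kubernetes documentation), or turn the host firewall off.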
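As a rough sketch of the "open the required ports" option, the helper below prints (rather than runs) firewalld commands for the default ports listed on the Ports and Protocols page. The function name `k8s_firewalld_commands` and the dry-run design are assumptions made for this example; firewalld applies to hosts such as openEuler or Rocky Linux, and ports needed by the chosen CNI plugin are not covered.

```shell
#!/bin/sh
# Hypothetical helper: print firewalld commands for the default Kubernetes ports.
# Nothing is executed; review the output before running it as root.
k8s_firewalld_commands() {
  role="$1"   # "control-plane" or "worker"
  case "$role" in
    control-plane)
      # API server, etcd, kubelet, kube-scheduler, kube-controller-manager
      ports="6443/tcp 2379-2380/tcp 10250/tcp 10259/tcp 10257/tcp" ;;
    worker)
      # kubelet and the default NodePort range
      ports="10250/tcp 30000-32767/tcp" ;;
    *)
      echo "usage: k8s_firewalld_commands control-plane|worker" >&2
      return 1 ;;
  esac
  for p in $ports; do
    echo "firewall-cmd --permanent --add-port=$p"
  done
  echo "firewall-cmd --reload"
}

k8s_firewalld_commands control-plane
```

Printing the commands first keeps the step reviewable; piping the output to `sh` on a node would apply it.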

Create /etc/modules-load.d/containerd.conf so that the kernel modules required by the container runtime are loaded automatically at boot:

    cat << EOF > /etc/modules-load.d/containerd.conf
    overlay
    br_netfilter
    EOF

Load the modules immediately:

    modprobe overlay
    modprobe br_netfilter

Create the /etc/sysctl.d/99-kubernetes-cri.conf configuration file:

    cat << EOF > /etc/sysctl.d/99-kubernetes-cri.conf
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    net.ipv4.ip_forward = 1
    user.max_user_namespaces=28633
    EOF

Apply the settings:

    sysctl -p /etc/sysctl.d/99-kubernetes-cri.conf

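In the file name /etc/sysctl.d/99-kubernetes-cri.conf, the "99" prefix controls the load order. sysctl is the tool for configuring Linux kernel parameters; it sets them by writing to files under /proc/sys/. Configuration files placed in /etc/sysctl.d/ are loaded automatically at boot, one by one, in lexicographic order of their file names. The numeric prefix fixes a file's position in that order: a file with a smaller number is loaded earlier, and when two files set the same parameter, the value from the file loaded later (the larger number) is the one that takes effect. A 99 prefix therefore lets these settings override values from earlier files.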
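The ordering rule can be tried out safely with a small simulation. This is only a sketch: `effective_sysctl` is a made-up helper that scans a single directory, whereas the real boot-time loader also consults other directories such as /usr/lib/sysctl.d and /run/sysctl.d.

```shell
#!/bin/sh
# Illustrative only: mimic "load *.conf in file-name order, last assignment wins".
# effective_sysctl DIR KEY prints the value KEY would end up with.
effective_sysctl() {
  dir="$1"; key="$2"; value=""
  # The shell expands the glob in sorted order, like the boot-time loader.
  for f in "$dir"/*.conf; do
    [ -f "$f" ] || continue
    v="$(sed -n "s/^[[:space:]]*$key[[:space:]]*=[[:space:]]*//p" "$f" | tail -n 1)"
    [ -n "$v" ] && value="$v"
  done
  echo "$value"
}

# Demo in a throwaway directory (not the real /etc/sysctl.d).
demo="$(mktemp -d)"
echo "net.ipv4.ip_forward = 0" > "$demo/10-defaults.conf"
echo "net.ipv4.ip_forward = 1" > "$demo/99-kubernetes-cri.conf"
effective_sysctl "$demo" net.ipv4.ip_forward   # prints 1
rm -rf "$demo"
```

The file names in the demo are examples; only the 99-kubernetes-cri.conf name comes from this walkthrough.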

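1.2 Prerequisites for enabling IPVS on the servers

IPVS is already part of the mainline kernel, so enabling IPVS mode for kube-proxy only requires that the following kernel modules are loaded. On Linux kernel 4.19 and later, nf_conntrack_ipv4 has been merged into nf_conntrack, so on such kernels the module to look for is nf_conntrack.

    ip_vs
    ip_vs_rr
    ip_vs_wrr
    ip_vs_sh
    nf_conntrack_ipv4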

Create /etc/modules-load.d/ipvs.conf so that the required modules are loaded automatically after a node reboots:

    cat > /etc/modules-load.d/ipvs.conf <<EOF
    ip_vs
    ip_vs_rr
    ip_vs_wrr
    ip_vs_sh
    EOF

Load the modules immediately:

    modprobe ip_vs
    modprobe ip_vs_rr
    modprobe ip_vs_wrr
    modprobe ip_vs_sh

Use lsmod | grep -e ip_vs -e nf_conntrack to check that the required kernel modules are loaded.
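The same check can be scripted so that it reports only what is missing. The sketch below assumes the module list from ipvs.conf plus nf_conntrack; `missing_ipvs_modules` is a hypothetical helper name, and modules compiled directly into the kernel would not appear in lsmod output at all.

```shell
#!/bin/sh
# Hypothetical helper: read `lsmod` output on stdin and print every required
# module that is not loaded (ip_vs modules from ipvs.conf plus nf_conntrack).
missing_ipvs_modules() {
  loaded="$(awk 'NR > 1 { print $1 }')"   # first column, header skipped
  for m in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack; do
    echo "$loaded" | grep -qx "$m" || echo "$m"
  done
}

# Typical use on a node; prints nothing when all modules are present.
if command -v lsmod >/dev/null 2>&1; then
  lsmod | missing_ipvs_modules
fi
```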

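Next, make sure the ipset package is installed on every node. To make the IPVS proxy rules easy to inspect, it is also worth installing the ipvsadm management tool.

On Ubuntu, run:

    apt install -y ipset ipvsadm

On openEuler or Rocky Linux, run:

    yum install -y ipset ipvsadm

If these prerequisites are not met, kube-proxy falls back to iptables mode even when its configuration enables IPVS mode.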

1.3 Deploying the container runtime containerd

Install the containerd container runtime on every server node.

Download the containerd binary package. Note that the cri-containerd-(cni-)-VERSION-OS-ARCH.tar.gz release bundles have been deprecated since containerd 1.6, do not work on some Linux distributions, and will be removed in containerd 2.0. Download the containerd-<VERSION>-<OS>-<ARCH>.tar.gz release instead, and install runc and the CNI plugins separately afterwards:

    wget https://github.com/containerd/containerd/releases/download/v1.7.11/containerd-1.7.11-linux-amd64.tar.gz

Extract it to /usr/local:

    tar Cxzvf /usr/local containerd-1.7.11-linux-amd64.tar.gz

    bin/
    bin/containerd-shim-runc-v2
    bin/ctr
    bin/containerd-shim
    bin/containerd-shim-runc-v1
    bin/containerd-stress
    bin/containerd

Next, download and install runc separately from the runc GitHub releases. The binary is statically built and should work on any Linux distribution.

    wget https://github.com/opencontainers/runc/releases/download/v1.1.9/runc.amd64
    install -m 755 runc.amd64 /usr/local/sbin/runc

Next, generate the containerd configuration file:

    mkdir -p /etc/containerd
    containerd config default > /etc/containerd/config.toml

According to the "Container runtimes" documentation, on Linux distributions that use systemd as the init system, using systemd as the container cgroup driver keeps nodes more stable under resource pressure. The cgroup driver of containerd is therefore set to systemd on every node.

Edit the generated configuration file /etc/containerd/config.toml:

    [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
      ...
      [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
        SystemdCgroup = true

Also change the sandbox image in /etc/containerd/config.toml:

    [plugins."io.containerd.grpc.v1.cri"]
      ...
      # sandbox_image = "registry.k8s.io/pause:3.8"
      sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"
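Both edits can also be applied without an editor. The following is a minimal sketch, assuming the generated default file contains the lines `SystemdCgroup = false` and `sandbox_image = "..."` exactly once each; `patch_containerd_config` is a name invented for this example.

```shell
#!/bin/sh
# Sketch: apply the two config.toml edits non-interactively.
# patch_containerd_config FILE rewrites FILE in place via a temporary copy.
patch_containerd_config() {
  cfg="$1"
  tmp="$(mktemp)"
  sed -e 's/SystemdCgroup = false/SystemdCgroup = true/' \
      -e 's|sandbox_image = ".*"|sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"|' \
      "$cfg" > "$tmp" && cat "$tmp" > "$cfg"
  rm -f "$tmp"
}

# Usage on each node (as root):
#   patch_containerd_config /etc/containerd/config.toml
```

If containerd is already running when the file is changed, it has to be restarted to pick up the new settings.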

To start containerd through systemd, the containerd.service unit file from https://raw.githubusercontent.com/containerd/containerd/main/containerd.service also has to be placed at /etc/systemd/system/containerd.service.

    cat << EOF > /etc/systemd/system/containerd.service
    [Unit]
    Description=containerd container runtime
    Documentation=https://containerd.io
    After=network.target local-fs.target

    [Service]
    ExecStartPre=-/sbin/modprobe overlay
    ExecStart=/usr/local/bin/containerd

    Type=notify
    Delegate=yes
    KillMode=process
    Restart=always
    RestartSec=5

    # Having non-zero Limit*s causes performance problems due to accounting overhead
    # in the kernel. We recommend using cgroups to do container-local accounting.
    LimitNPROC=infinity
    LimitCORE=infinity

    # Comment TasksMax if your systemd version does not supports it.
    # Only systemd 226 and above support this version.
    TasksMax=infinity
    OOMScoreAdjust=-999

    [Install]
    WantedBy=multi-user.target
    EOF

Enable containerd at boot and start it now:

    systemctl daemon-reload
    systemctl enable containerd --now
    systemctl status containerd

Download and install the crictl tool:

    wget https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.29.0/crictl-v1.29.0-linux-amd64.tar.gz
    tar -zxvf crictl-v1.29.0-linux-amd64.tar.gz
    install -m 755 crictl /usr/local/bin/crictl

Test with crictl and make sure the version information is printed without any error messages:

    crictl --runtime-endpoint=unix:///run/containerd/containerd.sock version

    Version:  0.1.0
    RuntimeName:  containerd
    RuntimeVersion:  v1.7.11
    RuntimeApiVersion:  v1

2. Deploying Kubernetes with kubeadm

2.1 Installing kubeadm and kubelet

Install kubeadm and kubelet on every node.

On Ubuntu, run the following commands:

    apt-get update
    apt-get install -y apt-transport-https ca-certificates curl gpg

    # Add the signing key and the v1.29 package repository
    curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.29/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg
    echo 'deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.29/deb/ /' | sudo tee /etc/apt/sources.list.d/kubernetes.list

    apt-get update
    apt install kubelet kubeadm kubectl

    # Hold the packages so routine upgrades do not change their version
    apt-mark hold kubelet kubeadm kubectl

On openEuler and Rocky Linux, run the following commands:

    cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/
    enabled=1
    gpgcheck=1
    gpgkey=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/repodata/repomd.xml.key
    exclude=kubelet kubeadm kubectl cri-tools kubernetes-cni
    EOF

    yum makecache
    yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

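Running kubelet --help shows that most of the kubelet's command-line flags are now marked DEPRECATED. The recommended approach is to pass a configuration file with --config and set there what the flags used to configure; see "Set Kubelet parameters via a config file" for details. Kubernetes originally made this change to support Dynamic Kubelet Configuration, but that feature was deprecated in Kubernetes 1.22 and removed in 1.24. To adjust the kubelet configuration on all nodes of a cluster, distributing the configuration with a tool such as Ansible is still the recommended way.

The kubelet configuration file must be in JSON or YAML format; see the Kubernetes documentation for details.

Before Kubernetes 1.22, Kubernetes offered no NodeSwap feature on Linux: swap had to be turned off, and with the default configuration the kubelet would not start while swap was on.

Kubernetes 1.22 introduced alpha support for NodeSwap, and the feature moved to beta in Kubernetes 1.28.

Here we enable NodeSwap. Swap use on a node is enabled by turning on the NodeSwap feature gate of the kubelet. In addition, the failSwapOn configuration setting must be set to false, or the deprecated --fail-swap-on command-line flag must be turned off.

The memorySwap.swapBehavior option defines how a node uses swap memory. For example:

    # This fragment goes into the kubelet configuration file
    memorySwap:
      swapBehavior: UnlimitedSwap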

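The available values for swapBehavior are:

- UnlimitedSwap (default): Kubernetes workloads can use as much swap memory as they request, up to the system limit.

- LimitedSwap: swap use by Kubernetes workloads is limited. Only Pods of Burstable QoS are allowed to use swap.

If memorySwap is not configured and the NodeSwap feature gate is enabled, the kubelet applies the same behavior as the UnlimitedSwap setting by default.

Note that NodeSwap supports cgroup v2 only. As of Kubernetes v1.28, using swap together with cgroup v1 is no longer supported.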
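Putting the three settings together, a kubelet configuration fragment for swap could look like the sketch below. It is written with a heredoc in the same style as the other files in this walkthrough; the helper name and the output file name are placeholders, and the field values are the ones discussed above for Kubernetes 1.28/1.29.

```shell
#!/bin/sh
# Sketch: write a KubeletConfiguration fragment that turns swap support on.
# The helper and the output path are placeholders chosen for this example.
write_kubelet_swap_config() {
  cat > "$1" <<'EOF'
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
featureGates:
  NodeSwap: true        # beta in 1.28/1.29, disabled by default
failSwapOn: false       # let the kubelet start while swap is on
memorySwap:
  swapBehavior: UnlimitedSwap
EOF
}

write_kubelet_swap_config "${TMPDIR:-/tmp}/kubelet-swap-fragment.yaml"
```

With kubeadm, the same fields would go into the KubeletConfiguration document of the kubeadm configuration file rather than into a separate file.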

The following command shows which cgroup versions the system supports:

    grep cgroup /proc/filesystems
    nodev   cgroup
    nodev   cgroup2
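/proc/filesystems lists what the kernel supports, not which hierarchy is actually mounted. A common way to see the version in use is to look at the filesystem type of /sys/fs/cgroup; the sketch below wraps that check in a small function (`cgroup_version` is a name made up for this example, and GNU `stat` is assumed).

```shell
#!/bin/sh
# Map the filesystem type of /sys/fs/cgroup to a cgroup version.
# cgroup2fs means the unified (v2) hierarchy is mounted; tmpfs means v1.
cgroup_version() {
  case "$1" in
    cgroup2fs) echo v2 ;;
    tmpfs)     echo v1 ;;
    *)         echo unknown ;;
  esac
}

# On a real node:
if [ -d /sys/fs/cgroup ]; then
  cgroup_version "$(stat -fc %T /sys/fs/cgroup/ 2>/dev/null)"
fi
```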

2.2 Initializing the cluster with kubeadm init

Enable the kubelet service at boot on every node:

    systemctl enable kubelet.service

kubeadm config print init-defaults --component-configs KubeletConfiguration prints the default configuration used for cluster initialization:

    apiVersion: kubeadm.k8s.io/v1beta3
    bootstrapTokens:
    - groups:
      - system:bootstrappers:kubeadm:default-node-token
      token: abcdef.0123456789abcdef
      ttl: 24h0m0s
      usages:
      - signing
      - authentication
    kind: InitConfiguration
    localAPIEndpoint:
      advertiseAddress: 1.2.3.4
      bindPort: 6443
    nodeRegistration:
      criSocket: unix:///var/run/containerd/containerd.sock
      imagePullPolicy: IfNotPresent
      name: node
      taints: null
    ---
    apiServer:
      timeoutForControlPlane: 4m0s
    apiVersion: kubeadm.k8s.io/v1beta3
    certificatesDir: /etc/kubernetes/pki
    clusterName: kubernetes
    controllerManager: {}
    dns: {}
    etcd:
      local:
        dataDir: /var/lib/etcd
    imageRepository: registry.k8s.io
    kind: ClusterConfiguration
    kubernetesVersion: 1.29.0
    networking:
      dnsDomain: cluster.local
      serviceSubnet: 10.96.0.0/12
    scheduler: {}
    ---
    apiVersion: kubelet.config.k8s.io/v1beta1
    authentication:
      anonymous:
        enabled: false
      webhook:
        cacheTTL: 0s
        enabled: true
      x509:
        clientCAFile: /etc/kubernetes/pki/ca.crt
    authorization:
      mode: Webhook
      webhook:
        cacheAuthorizedTTL: 0s
        cacheUnauthorizedTTL: 0s
    cgroupDriver: systemd
    clusterDNS:
    - 10.96.0.10
    clusterDomain: cluster.local
    containerRuntimeEndpoint: ""
    cpuManagerReconcilePeriod: 0s
    evictionPressureTransitionPeriod: 0s
    fileCheckFrequency: 0s
    healthzBindAddress: 127.0.0.1
    healthzPort: 10248
    httpCheckFrequency: 0s
    imageMaximumGCAge: 0s
    imageMinimumGCAge: 0s
    kind: KubeletConfiguration
    logging:
      flushFrequency: 0
      options:
        json:
          infoBufferSize: "0"
      verbosity: 0
    memorySwap: {}
    nodeStatusReportFrequency: 0s
    nodeStatusUpdateFrequency: 0s
    resolvConf: /run/systemd/resolve/resolv.conf
    rotateCertificates: true
    runtimeRequestTimeout: 0s
    shutdownGracePeriod: 0s
    shutdownGracePeriodCriticalPods: 0s
    staticPodPath: /etc/kubernetes/manifests
    streamingConnectionIdleTimeout: 0s
    syncFrequency: 0s
    volumeStatsAggPeriod: 0s

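The default configuration shows that imageRepository can be used to customize where the images needed by Kubernetes are pulled from during cluster initialization. Based on the defaults, the configuration file kubeadm.yaml used for this kubeadm initialization is: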

    apiVersion: kubeadm.k8s.io/v1beta3
    kind: InitConfiguration
    localAPIEndpoint:
      advertiseAddress: 192.168.96.154
      bindPort: 6443
    nodeRegistration:
      criSocket: unix:///run/containerd/containerd.sock
      taints:
      - effect: PreferNoSchedule
        key: node-role.kubernetes.io/master
    ---
    apiVersion: kubeadm.k8s.io/v1beta3
    kind: ClusterConfiguration
    kubernetesVersion: 1.29.0
    imageRepository: registry.aliyuncs.com/google_containers
    networking:
      podSubnet: 10.244.0.0/16
    ---
    apiVersion: kubelet.config.k8s.io/v1beta1
    kind: KubeletConfiguration
    cgroupDriver: systemd
    failSwapOn: false
    ---
    apiVersion: kubeproxy.config.k8s.io/v1alpha1
    kind: KubeProxyConfiguration
    mode: ipvs

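imageRepository is set to the Alibaba Cloud registry because the default registry is blocked here and images cannot be pulled from it directly. criSocket selects containerd as the container runtime.

The kubelet cgroupDriver is set to systemd, and the kube-proxy proxy mode is set to ipvs.

Before initializing the cluster, kubeadm config images pull --config kubeadm.yaml can be used to pre-pull the container images Kubernetes needs on every server node.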

    kubeadm config images list --config kubeadm.yaml
    registry.aliyuncs.com/google_containers/kube-apiserver:v1.29.0
    registry.aliyuncs.com/google_containers/kube-controller-manager:v1.29.0
    registry.aliyuncs.com/google_containers/kube-scheduler:v1.29.0
    registry.aliyuncs.com/google_containers/kube-proxy:v1.29.0
    registry.aliyuncs.com/google_containers/coredns:v1.11.1
    registry.aliyuncs.com/google_containers/pause:3.9
    registry.aliyuncs.com/google_containers/etcd:3.5.10-0

    kubeadm config images pull --config kubeadm.yaml
    [config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.29.0
    [config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.29.0
    [config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.29.0
    [config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.29.0
    [config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.11.1
    [config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.9
    [config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.5.10-0

Next, initialize the cluster with kubeadm. node4 is chosen as the master node; run the following command on node4:

    kubeadm init --config kubeadm.yaml

    [init] Using Kubernetes version: v1.29.0
    [preflight] Running pre-flight checks
            [WARNING Swap]: swap is supported for cgroup v2 only; the NodeSwap feature gate of the kubelet is beta but disabled by default
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Using existing ca certificate authority
    [certs] Using existing apiserver certificate and key on disk
    [certs] Using existing apiserver-kubelet-client certificate and key on disk
    [certs] Using existing front-proxy-ca certificate authority
    [certs] Using existing front-proxy-client certificate and key on disk
    [certs] Using existing etcd/ca certificate authority
    [certs] Using existing etcd/server certificate and key on disk
    [certs] Using existing etcd/peer certificate and key on disk
    [certs] Using existing etcd/healthcheck-client certificate and key on disk
    [certs] Using existing apiserver-etcd-client certificate and key on disk
    [certs] Using the existing "sa" key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "super-admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Starting the kubelet
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [apiclient] All control plane components are healthy after 12.006365 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node node4 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]
    [mark-control-plane] Marking the node node4 as control-plane by adding the taints [node-role.kubernetes.io/master:PreferNoSchedule]
    [bootstrap-token] Using token: alhelp.oyjw8wk6zyw5b55p
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes
    [bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Alternatively, if you are the root user, you can run:

      export KUBECONFIG=/etc/kubernetes/admin.conf

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.96.154:6443 --token alhelp.oyjw8wk6zyw5b55p \
        --discovery-token-ca-cert-hash sha256:402b5d2d29367ada9b8ee2b37bfb246a318cbfce71d9e38c9117701455714f3e

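The complete initialization output is recorded above. It shows the key steps that a manual installation of a Kubernetes cluster would need. The important parts are:

- [certs] generates the various certificates

- [kubeconfig] generates the kubeconfig files

- [kubelet-start] generates the kubelet configuration file "/var/lib/kubelet/config.yaml"

- [control-plane] creates the static Pods for apiserver, controller-manager and scheduler from the YAML files in /etc/kubernetes/manifests

- [bootstrap-token] generates the token; record it, because it is needed later when adding nodes with kubeadm join

- [addons] installs the essential add-ons: CoreDNS and kube-proxy

- The following commands configure kubectl access to the cluster for a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

- Finally, the output gives the command for joining the other two nodes to the cluster:

      kubeadm join 192.168.96.154:6443 --token alhelp.oyjw8wk6zyw5b55p \
          --discovery-token-ca-cert-hash sha256:402b5d2d29367ada9b8ee2b37bfb246a318cbfce71d9e38c9117701455714f3e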

Check the cluster status and confirm that every component is healthy. Because ComponentStatus has been deprecated since v1.19, the readyz and livez endpoints of the API server are checked as well:

    kubectl get cs

    Warning: v1 ComponentStatus is deprecated in v1.19+
    NAME                 STATUS    MESSAGE   ERROR
    controller-manager   Healthy   ok
    scheduler            Healthy   ok
    etcd-0               Healthy   ok

    kubectl get --raw='/readyz?verbose'
    ...
    readyz check passed

    kubectl get --raw='/livez?verbose'
    ...
    livez check passed

If cluster initialization runs into problems, clean up with the kubeadm reset command.

2.3 Installing the package manager Helm 3

Helm is the package manager for Kubernetes, and the following steps use it to install common Kubernetes components. Install helm on the master node node4 first.

    wget https://get.helm.sh/helm-v3.13.3-linux-amd64.tar.gz
    tar -zxvf helm-v3.13.3-linux-amd64.tar.gz
    install -m 755 linux-amd64/helm /usr/local/bin/helm

Run helm list and confirm that it prints no errors.

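2.4 Deploying the Pod network component Calico

Calico is chosen as the Pod network component for the cluster; it is installed into the cluster with helm.

Download the tigera-operator helm chart:

    wget https://github.com/projectcalico/calico/releases/download/v3.27.0/tigera-operator-v3.27.0.tgz

Show the customizable values of this chart: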

    helm show values tigera-operator-v3.27.0.tgz

    # imagePullSecrets is a special helm field which, when specified, creates a secret
    # containing the pull secret which is used to pull all images deployed by this helm chart and the resulting operator.
    # this field is a map where the key is the desired secret name and the value is the contents of the imagePullSecret.
    #
    # Example: --set-file imagePullSecrets.gcr=./pull-secret.json
    imagePullSecrets: {}

    installation:
      enabled: true
      kubernetesProvider: ""
      # imagePullSecrets are configured on all images deployed by the tigera-operator.
      # secrets specified here must exist in the tigera-operator namespace; they won't be created by the operator or helm.
      # imagePullSecrets are a slice of LocalObjectReferences, which is the same format they appear as on deployments.
      #
      # Example: --set installation.imagePullSecrets[0].name=my-existing-secret
      imagePullSecrets: []

    apiServer:
      enabled: true

    certs:
      node:
        key:
        cert:
        commonName:
      typha:
        key:
        cert:
        commonName:
        caBundle:

    # Resource requests and limits for the tigera/operator pod.
    resources: {}

    # Tolerations for the tigera/operator pod.
    tolerations:
    - effect: NoExecute
      operator: Exists
    - effect: NoSchedule
      operator: Exists

    # NodeSelector for the tigera/operator pod.
    nodeSelector:
      kubernetes.io/os: linux

    # Custom annotations for the tigera/operator pod.
    podAnnotations: {}

    # Custom labels for the tigera/operator pod.
    podLabels: {}

    # Image and registry configuration for the tigera/operator pod.
    tigeraOperator:
      image: tigera/operator
      version: v1.32.3
      registry: quay.io
    calicoctl:
      image: docker.io/calico/ctl
      tag: v3.27.0

    kubeletVolumePluginPath: /var/lib/kubelet

The customized values.yaml is:

    # The values above can be customized, for example to pull the calico images from a private registry.
    # This is only a personal local environment for testing a new k8s version, so only these few lines are set.
    apiServer:
      enabled: false
    installation:
      kubeletVolumePluginPath: None

Install calico with helm:

    helm install calico tigera-operator-v3.27.0.tgz -n kube-system --create-namespace -f values.yaml

Wait and confirm that all Pods are in the Running state:

    kubectl get pod -n kube-system | grep tigera-operator
    tigera-operator-55585899bf-qkr84           1/1     Running   0          26s

    kubectl get pods -n calico-system
    NAME                                       READY   STATUS    RESTARTS   AGE
    calico-kube-controllers-6784546df7-5dzld   1/1     Running   0          5m30s
    calico-node-24px9                          1/1     Running   0          5m30s
    calico-typha-75854bc9c9-5zvrb              1/1     Running   0          5m31s
    csi-node-driver-mttxs                      2/2     Running   0          5m30s

List the API resources that calico added to the cluster:

    kubectl api-resources | grep calico
    bgpconfigurations               crd.projectcalico.org/v1   false   BGPConfiguration
    bgpfilters                      crd.projectcalico.org/v1   false   BGPFilter
    bgppeers                        crd.projectcalico.org/v1   false   BGPPeer
    blockaffinities                 crd.projectcalico.org/v1   false   BlockAffinity
    caliconodestatuses              crd.projectcalico.org/v1   false   CalicoNodeStatus
    clusterinformations             crd.projectcalico.org/v1   false   ClusterInformation
    felixconfigurations             crd.projectcalico.org/v1   false   FelixConfiguration
    globalnetworkpolicies           crd.projectcalico.org/v1   false   GlobalNetworkPolicy
    globalnetworksets               crd.projectcalico.org/v1   false   GlobalNetworkSet
    hostendpoints                   crd.projectcalico.org/v1   false   HostEndpoint
    ipamblocks                      crd.projectcalico.org/v1   false   IPAMBlock
    ipamconfigs                     crd.projectcalico.org/v1   false   IPAMConfig
    ipamhandles                     crd.projectcalico.org/v1   false   IPAMHandle
    ippools                         crd.projectcalico.org/v1   false   IPPool
    ipreservations                  crd.projectcalico.org/v1   false   IPReservation
    kubecontrollersconfigurations   crd.projectcalico.org/v1   false   KubeControllersConfiguration
    networkpolicies                 crd.projectcalico.org/v1   true    NetworkPolicy
    networksets                     crd.projectcalico.org/v1   true    NetworkSet

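These API resources belong to calico, so managing them with plain kubectl is not recommended; install calicoctl and manage them with it instead. Install calicoctl as a kubectl plugin:

    cd /usr/local/bin
    # -L follows the GitHub redirect; -o saves the binary under the plugin name
    curl -L -o kubectl-calico "https://github.com/projectcalico/calico/releases/download/v3.27.0/calicoctl-linux-amd64"
    chmod +x kubectl-calico

Verify that the plugin works:

    kubectl calico -h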

2.5 Verifying that cluster DNS works

    kubectl run curl --image=radial/busyboxplus:curl -it
    If you don't see a command prompt, try pressing enter.
    [ root@curl:/ ]$

Inside the container, run nslookup kubernetes.default and confirm that the name resolves:

    nslookup kubernetes.default
    Server:    10.96.0.10
    Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

    Name:      kubernetes.default
    Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

2.6 Adding nodes to the Kubernetes cluster

Add node5 and node6 to the cluster by running the following on each of them:

    kubeadm join 192.168.96.154:6443 --token alhelp.oyjw8wk6zyw5b55p \
        --discovery-token-ca-cert-hash sha256:402b5d2d29367ada9b8ee2b37bfb246a318cbfce71d9e38c9117701455714f3e
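The bootstrap token printed by kubeadm init is short-lived (the default configuration shown earlier has ttl: 24h0m0s), so a join attempted later may need a new one. The sketch below checks the token format before assembling a join command; both function names are invented for this example, and the endpoint, token and hash in the last line are placeholders.

```shell
#!/bin/sh
# Bootstrap tokens have the form "<6 chars>.<16 chars>", lowercase letters and digits.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Hypothetical wrapper: refuse to build a join command from a malformed token.
build_join_command() {
  endpoint="$1"; token="$2"; ca_hash="$3"
  is_bootstrap_token "$token" || { echo "malformed token: $token" >&2; return 1; }
  echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash $ca_hash"
}

# If the original token has expired, a fresh join command can be printed on the
# control-plane node with:
#   kubeadm token create --print-join-command
build_join_command 192.168.96.154:6443 abcdef.0123456789abcdef sha256:EXAMPLE
```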

When node5 and node6 joined the cluster, the following problem appeared: the Calico Pods scheduled onto node5 (openEuler 22.03) or node6 (Rocky Linux 8.8) could not start and reported this error:

    kubectl describe po calico-node-ht7cf -n calico-system
    ...
    kubelet Failed to create pod sandbox: open /run/systemd/resolve/resolv.conf: no such file or directory

The file /run/systemd/resolve/resolv.conf is managed by the systemd-resolved service, and it is the path set as resolvConf in the kubelet configuration printed earlier. Ubuntu 22.04 installs and starts this service by default. openEuler 22.03 does not have it installed. Rocky Linux 8.8 installs it by default but does not start it.

Install and start systemd-resolved on node5:

    yum install -y systemd-resolved
    systemctl enable systemd-resolved --now
    systemctl status systemd-resolved

Start systemd-resolved on node6:

    systemctl enable systemd-resolved --now
    systemctl status systemd-resolved

After that, all calico Pods on the three nodes start normally:

    kubectl get po -n calico-system -o wide
    NAME                                       READY   STATUS    RESTARTS   AGE     IP               NODE    NOMINATED NODE   READINESS GATES
    calico-kube-controllers-6784546df7-5dzld   1/1     Running   0          20m     10.244.3.67      node4   <none>           <none>
    calico-node-24px9                          1/1     Running   0          20m     192.168.96.154   node4   <none>           <none>
    calico-node-ht7cf                          1/1     Running   0          7m6s    192.168.96.155   node5   <none>           <none>
    calico-node-tzql5                          1/1     Running   0          7m4s    192.168.96.156   node6   <none>           <none>
    calico-typha-75854bc9c9-5zvrb              1/1     Running   0          20m     192.168.96.154   node4   <none>           <none>
    calico-typha-75854bc9c9-l8bmh              1/1     Running   0          6m56s   192.168.96.155   node5   <none>           <none>
    csi-node-driver-2mk5s                      2/2     Running   0          7m6s    10.244.33.129    node5   <none>           <none>
    csi-node-driver-9qrzx                      2/2     Running   0          7m4s    10.244.139.1     node6   <none>           <none>
    csi-node-driver-mttxs                      2/2     Running   0          20m     10.244.3.65      node4   <none>           <none>

On the master node, list the nodes in the cluster (wait until the calico-node Pods on the newly joined nodes are running):

    kubectl get node
    NAME    STATUS   ROLES           AGE     VERSION
    node4   Ready    control-plane   34m     v1.29.0
    node5   Ready    <none>          7m31s   v1.29.0
    node6   Ready    <none>          7m29s   v1.29.0
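Waiting for the new nodes can be scripted instead of re-running the command by hand. The filter below is a small sketch that reads `kubectl get node` output and prints nodes that are not yet Ready; `not_ready_nodes` is a hypothetical name, and a status such as `Ready,SchedulingDisabled` would also be reported by this simple comparison.

```shell
#!/bin/sh
# Read `kubectl get node` output on stdin and print nodes whose STATUS is not Ready.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Against a live cluster, kubectl can also block until every node is Ready:
#   kubectl wait --for=condition=Ready node --all --timeout=300s
if command -v kubectl >/dev/null 2>&1; then
  kubectl get node 2>/dev/null | not_ready_nodes
fi
```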

3. Deploying common Kubernetes components

3.1 Deploying ingress-nginx with Helm

An Ingress makes it easy to expose services in the cluster to the outside. Next, ingress-nginx is deployed to Kubernetes with Helm. The Nginx Ingress Controller is deployed on the edge node of the cluster.

Here node4 (192.168.96.154) serves as the edge node and gets the corresponding label:

    kubectl label node node4 node-role.kubernetes.io/edge=

Download the ingress-nginx helm chart:

    wget https://github.com/kubernetes/ingress-nginx/releases/download/helm-chart-4.9.0/ingress-nginx-4.9.0.tgz

Show the customizable values of the ingress-nginx-4.9.0.tgz chart:

    helm show values ingress-nginx-4.9.0.tgz

The customized values.yaml is:

    controller:
      ingressClassResource:
        name: nginx
        enabled: true
        default: true
        controllerValue: "k8s.io/ingress-nginx"
      admissionWebhooks:
        enabled: false
      replicaCount: 1
      image:
        # registry: registry.k8s.io
        # image: ingress-nginx/controller
        # tag: "v1.9.5"
        registry: docker.io
        image: unreachableg/registry.k8s.io_ingress-nginx_controller
        tag: "v1.9.5"
        digest: sha256:bdc54c3e73dcec374857456559ae5757e8920174483882b9e8ff1a9052f96a35
      hostNetwork: true
      nodeSelector:
        node-role.kubernetes.io/edge: ''
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - nginx-ingress
              - key: component
                operator: In
                values:
                - controller
            topologyKey: kubernetes.io/hostname
      tolerations:
        - key: node-role.kubernetes.io/master
          operator: Exists
          effect: NoSchedule
        - key: node-role.kubernetes.io/master
          operator: Exists
          effect: PreferNoSchedule

The nginx ingress controller runs with replicaCount 1 and is scheduled onto the edge node node4. No externalIPs are set on the controller's Service; instead, hostNetwork: true makes the controller use the host network.

Because registry.k8s.io is blocked, the image is replaced with unreachableg/registry.k8s.io_ingress-nginx_controller. Pull the image in advance, then install the chart and check the Pod:

    # Pre-pull the controller image on the edge node
    crictl --runtime-endpoint=unix:///run/containerd/containerd.sock pull unreachableg/registry.k8s.io_ingress-nginx_controller:v1.9.5

    # Install the chart with the customized values
    helm install ingress-nginx ingress-nginx-4.9.0.tgz --create-namespace -n ingress-nginx -f values.yaml

    kubectl get po -n ingress-nginx
    NAME                                        READY   STATUS    RESTARTS   AGE
    ingress-nginx-controller-6445445cb8-c4fh4   1/1     Running   0          74s

Open http://192.168.96.154 as a test; if the default nginx 404 page is returned, the deployment is complete.

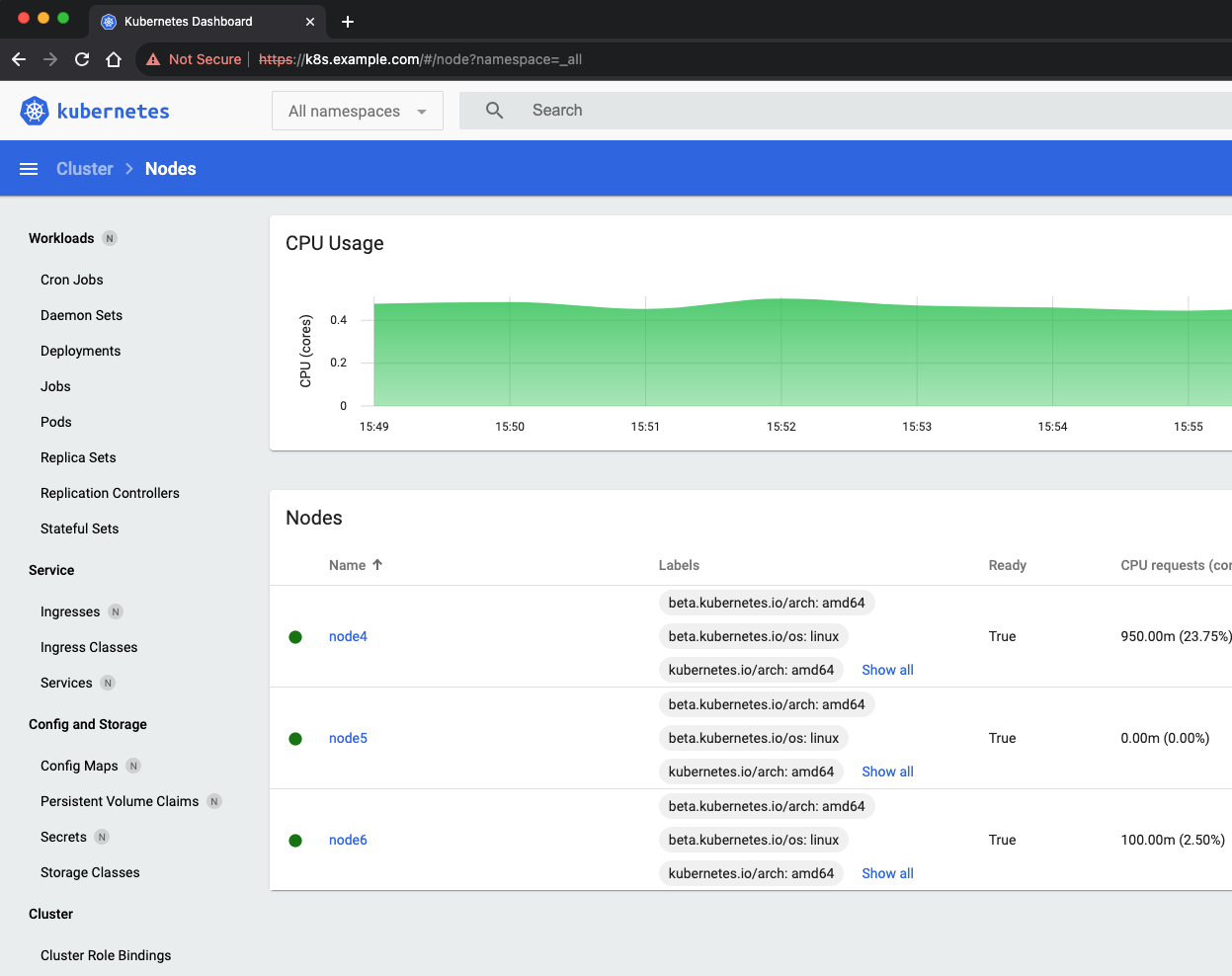

3.2 Deploying the dashboard with Helm

Deploy metrics-server first:

    wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.6.4/components.yaml

In components.yaml, change the image to docker.io/unreachableg/k8s.gcr.io_metrics-server_metrics-server:v0.6.4.

Also in components.yaml, add --kubelet-insecure-tls to the container's startup arguments.
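Both manifest changes can be made with a small filter instead of a manual edit. The sketch below assumes that components.yaml contains an `image:` line mentioning metrics-server and a `- args:` line that opens the container's argument list; `patch_metrics_server` is a name chosen for this example. Note that --kubelet-insecure-tls skips verification of the kubelet serving certificates, which is usually acceptable only in test environments like this one.

```shell
#!/bin/sh
# Sketch: print a patched copy of components.yaml on stdout.
# Assumes the manifest has an "image: ...metrics-server..." line and a
# "- args:" line that opens the container's argument list.
NEW_IMAGE="docker.io/unreachableg/k8s.gcr.io_metrics-server_metrics-server:v0.6.4"

patch_metrics_server() {
  awk -v img="$NEW_IMAGE" '
    /^[ \t]*image:.*metrics-server/ { sub(/image:.*/, "image: " img) }
    { print }
    /^[ \t]*- args:[ \t]*$/ {
      match($0, /^[ \t]*/)                       # indentation of "- args:"
      print substr($0, 1, RLENGTH) "  - --kubelet-insecure-tls"
    }
  ' "$1"
}

# Usage: patch_metrics_server components.yaml > components-patched.yaml
```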

    kubectl apply -f components.yaml

Once the metrics-server Pod is running, wait a little while; kubectl top can then show metrics for the cluster and its Pods:

    kubectl top node
    NAME    CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
    node4   373m         9%     2184Mi          27%
    node5   42m          1%     968Mi           12%
    node6   131m         3%     918Mi           12%

    kubectl top pod -n kube-system
    NAME                               CPU(cores)   MEMORY(bytes)
    coredns-857d9ff4c9-5pvft           6m           13Mi
    coredns-857d9ff4c9-zkmm6           5m           13Mi
    etcd-node4                         62m          58Mi
    kube-apiserver-node4               154m         350Mi
    kube-controller-manager-node4      35m          45Mi
    kube-proxy-4qfvt                   31m          19Mi
    kube-proxy-98k25                   9m           17Mi
    kube-proxy-rbh22                   9m           18Mi
    kube-scheduler-node4               9m           16Mi
    metrics-server-7d686f4d9d-pxn8g    13m          18Mi
    tigera-operator-55585899bf-qkr84   5m           28Mi

Next, deploy the Kubernetes dashboard. The dashboard has now reached v3.0.0-alpha0, so this walkthrough tries out the v3 release.

Starting with v3, the underlying architecture of the dashboard has changed and a clean installation is required. When upgrading an existing dashboard, remove the previous installation first; this is a fresh installation, so that step can be skipped.

The v3 dashboard uses cert-manager and nginx-ingress-controller by default. When installing from the YAML manifest, make sure both are already installed in the cluster.

nginx-ingress-controller was installed earlier, so install cert-manager now:

    wget https://github.com/cert-manager/cert-manager/releases/download/v1.13.3/cert-manager.yaml

    kubectl apply -f cert-manager.yaml

Make sure all cert-manager Pods are running:

    kubectl get po -n cert-manager
    NAME                                       READY   STATUS    RESTARTS   AGE
    cert-manager-6774cd657f-q9qpf              1/1     Running   0          102s
    cert-manager-cainjector-55c8b7b49b-vf8r4   1/1     Running   0          102s
    cert-manager-webhook-57797c469d-cgw4n      1/1     Running   0          102s

Download the dashboard YAML manifest:

    wget https://raw.githubusercontent.com/kubernetes/dashboard/v3.0.0-alpha0/charts/kubernetes-dashboard.yaml

Edit the kubernetes-dashboard.yaml manifest and replace the host in its Ingress with the domain name to be assigned to the dashboard:

    kind: Ingress
    apiVersion: networking.k8s.io/v1
    metadata:
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
      labels:
        app.kubernetes.io/name: nginx-ingress
        app.kubernetes.io/part-of: kubernetes-dashboard
      annotations:
        nginx.ingress.kubernetes.io/ssl-redirect: "true"
        cert-manager.io/issuer: selfsigned
    spec:
      ingressClassName: nginx
      tls:
        - hosts:
            - localhost
          secretName: kubernetes-dashboard-certs
      rules:
        - host: k8s.example.com
          http:
            paths:
              - path: /
                pathType: Prefix
                backend:
                  service:
                    name: kubernetes-dashboard-web
                    port:
                      name: web
              - path: /api
                pathType: Prefix
                backend:
                  service:
                    name: kubernetes-dashboard-api
                    port:
                      name: api

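Here the dashboard is exposed outside the cluster through an Ingress, which mimics a real environment where exposed services are reached by domain name. The domain configured in the dashboard Ingress is k8s.example.com, so the DNS used by the client machine running the browser must resolve that name to the ingress controller (192.168.96.154 here).

Without such a DNS record, add the hosts entry 192.168.96.154 k8s.example.com manually on the client machine.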
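On a Linux or macOS client the hosts entry can be added idempotently with a few lines of shell. This is a minimal sketch; `add_hosts_entry` is a hypothetical helper, and on the real client it would be pointed at /etc/hosts with root privileges.

```shell
#!/bin/sh
# Append "IP NAME" to a hosts file unless NAME is already mapped there.
add_hosts_entry() {
  file="$1"; ip="$2"; name="$3"
  if grep -Eq "[[:space:]]$name([[:space:]]|\$)" "$file" 2>/dev/null; then
    return 0          # already present, leave the file alone
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# On the client machine (as root):
#   add_hosts_entry /etc/hosts 192.168.96.154 k8s.example.com
```

Checking before appending keeps repeated runs from piling up duplicate lines.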

Apply the dashboard YAML manifest:

    kubectl apply -f kubernetes-dashboard.yaml

Confirm that the dashboard Pods are running:

    kubectl get po -n kubernetes-dashboard
    NAME                                                    READY   STATUS    RESTARTS   AGE
    kubernetes-dashboard-api-8586787f7-txzdx                1/1     Running   0          3m40s
    kubernetes-dashboard-metrics-scraper-6959b784dc-424p5   1/1     Running   0          3m40s
    kubernetes-dashboard-web-6b6d549b4-jcp2l                1/1     Running   0          3m40s

    kubectl get ingress -n kubernetes-dashboard
    NAME                   CLASS   HOSTS             ADDRESS   PORTS     AGE
    kubernetes-dashboard   nginx   k8s.example.com             80, 443   3m49s

Create the administrator service account:

    kubectl create serviceaccount kube-dashboard-admin-sa -n kube-system

    kubectl create clusterrolebinding kube-dashboard-admin-sa \
      --clusterrole=cluster-admin --serviceaccount=kube-system:kube-dashboard-admin-sa

Create the token that the cluster administrator needs to log in to the dashboard:

    kubectl create token kube-dashboard-admin-sa -n kube-system --duration=87600h

    eyJhbGciOiJSUzI1NiIsImtpZCI6Im5SWVpMcGZMcHFjYVdFcFNzX2kwTmwxYUx2M2NRckU5MFJBUmpSLW1fV28ifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoyMDE5MTExNjI5LCJpYXQiOjE3MDM3NTE2MjksImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJrdWJlLWRhc2hib2FyZC1hZG1pbi1zYSIsInVpZCI6ImQ1YzZiMDdmLWUzMDAtNDMzOS04ZDY1LTUwYzg0N2FjMjg2MCJ9fSwibmJmIjoxNzAzNzUxNjI5LCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06a3ViZS1kYXNoYm9hcmQtYWRtaW4tc2EifQ.EOFDNd0GvXjJpoUYFjOKDhuEbSJgLn6RuQeBgwjN-C4lR5C0URwXVarDUmGJTJZiAcHsajM1RGmR9u26vFvh9ZKTaQOkpJKYvJACiUwiOFZzGv_j2Cc5erZbiJskNMzl_Yt_fyACDpZpB20pjtT5e91C5Z7NPdgHbQsKt0Nkj6iLoIrGDihWBUEl33v1q1JixYyvtr9v2TcmmT8kQDmwluIsetW2TwN17ZVD1wsVz9iRgu0xwEWgzKh9FebQKJOsMmKWerca9ov_PD62ppElR0553-spgjjxow-rZ4mxn3u5M-dPfX57yIBQjczCd3jyEDedMs_RmRxUz_rtebdQAw

Log in to the Kubernetes dashboard with the token above.