Deploying Kubernetes 1.24 with kubeadm

📅 2022-05-25

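kubeadm is the official Kubernetes tool for quickly installing and deploying Kubernetes clusters. It is updated with every Kubernetes release, and each release adjusts some of its cluster-configuration practices, so experimenting with kubeadm is a good way to learn the new best practices the Kubernetes project recommends for configuring clusters.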

1. Preparation

1.1 System configuration

Complete the following preparation before installing. The three CentOS 7.9 hosts are:

```
cat /etc/hosts
192.168.96.151 node1
192.168.96.152 node2
192.168.96.153 node3
```

Complete the following system configuration on every host.

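If a firewall is enabled on the hosts, either open the ports required by the Kubernetes components (listed in the Ports and Protocols page of the Kubernetes documentation) or disable the host firewall.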

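Disable SELinux for the running system:

```
setenforce 0
```

Then edit `/etc/selinux/config` so the setting persists across reboots:

```
vi /etc/selinux/config
SELINUX=disabled
```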
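Instead of editing the file in vi, the persistent setting can be applied with sed. A minimal sketch, assuming GNU sed; `set_selinux_disabled` is a hypothetical helper that takes the config path as an argument so it can be tried on a copy of the file first:

```bash
# Hypothetical helper: make "SELINUX=disabled" the persistent SELinux mode.
# Usage: set_selinux_disabled [/etc/selinux/config]
set_selinux_disabled() {
  local cfg="${1:-/etc/selinux/config}"
  if grep -q '^SELINUX=' "$cfg"; then
    # Rewrite the existing SELINUX= line; SELINUXTYPE= does not match '^SELINUX='
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
  else
    # No SELINUX= line at all: append one
    echo 'SELINUX=disabled' >> "$cfg"
  fi
}
```

On a node, run `set_selinux_disabled` after `setenforce 0`. The sed substitution is idempotent, so running it again does no harm.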

Create the configuration file `/etc/modules-load.d/containerd.conf`, so the modules are loaded at boot:

```
cat << EOF > /etc/modules-load.d/containerd.conf
overlay
br_netfilter
EOF
```

Run the following commands to load the modules now:

```
modprobe overlay
modprobe br_netfilter
```

Create the configuration file `/etc/sysctl.d/99-kubernetes-cri.conf`:

```
cat << EOF > /etc/sysctl.d/99-kubernetes-cri.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
user.max_user_namespaces=28633
EOF
```

Run the following command to apply the settings:

```
sysctl -p /etc/sysctl.d/99-kubernetes-cri.conf
```
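To confirm the settings actually took effect (the `net.bridge.*` keys only exist once `br_netfilter` is loaded), the live values can be read from `/proc/sys`. A minimal sketch; `check_sysctl_enabled` is a hypothetical helper name, and the sysctl root is an argument only so the function can be tried against a fake directory tree:

```bash
# Hypothetical helper: verify that each kernel parameter is set to 1.
# Usage: check_sysctl_enabled /proc/sys key [key...]
check_sysctl_enabled() {
  local root="$1"; shift
  local key path rc=0
  for key in "$@"; do
    # sysctl keys map to paths under /proc/sys: net.ipv4.ip_forward -> net/ipv4/ip_forward
    path="$root/$(printf '%s' "$key" | tr . /)"
    if [ ! -r "$path" ]; then
      echo "$key: not present (is br_netfilter loaded?)"; rc=1
    elif [ "$(cat "$path")" != "1" ]; then
      echo "$key: $(cat "$path") (expected 1)"; rc=1
    fi
  done
  return "$rc"
}
```

For example: `check_sysctl_enabled /proc/sys net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward && echo OK`. `user.max_user_namespaces` is left out because its expected value is not 1.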

1.2 Prerequisites for enabling IPVS

IPVS has been merged into the mainline kernel, so enabling IPVS mode for kube-proxy requires loading the following kernel modules:

```
ip_vs
ip_vs_rr
ip_vs_wrr
ip_vs_sh
nf_conntrack_ipv4
```

Run the following script on every node:

```
cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4
```

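The script above creates `/etc/sysconfig/modules/ipvs.modules`, which ensures the required modules are loaded automatically after a node reboots. Use `lsmod | grep -e ip_vs -e nf_conntrack_ipv4` to check that the required kernel modules are loaded.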
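The module list above targets the CentOS 7.9 kernel (3.10). On kernels 4.19 and later, `nf_conntrack_ipv4` was merged into `nf_conntrack`, so `modprobe nf_conntrack_ipv4` fails there. A sketch of choosing the right name from the kernel release string; `conntrack_module_for` is a hypothetical helper:

```bash
# Hypothetical helper: print the conntrack module name for a kernel release.
# Usage: conntrack_module_for [release]   (defaults to the running kernel)
conntrack_module_for() {
  local release="${1:-$(uname -r)}"
  local major="${release%%.*}"      # "3.10.0-1160.el7.x86_64" -> "3"
  local rest="${release#*.}"        # -> "10.0-1160.el7.x86_64"
  local minor="${rest%%[!0-9]*}"    # leading digits only -> "10"
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
    echo nf_conntrack               # 4.19+: IPv4/IPv6 conntrack merged
  else
    echo nf_conntrack_ipv4
  fi
}
```

In the ipvs.modules script the last line could then become `modprobe -- "$(conntrack_module_for)"`.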

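Next, make sure the ipset package is installed on every node. To make the IPVS proxy rules easy to inspect, it is also a good idea to install the management tool ipvsadm:

```
yum install -y ipset ipvsadm
```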

If these prerequisites are not met, kube-proxy falls back to iptables mode even when its configuration enables IPVS mode.

1.3 Deploying the container runtime containerd

Install the container runtime containerd on every node.

Download the containerd binary package:

```
wget https://github.com/containerd/containerd/releases/download/v1.6.4/cri-containerd-cni-1.6.4-linux-amd64.tar.gz
```

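The `cri-containerd-cni-1.6.4-linux-amd64.tar.gz` archive is already laid out in the directory structure recommended for the official binary deployment. It contains the systemd unit file as well as the containerd and CNI deployment files.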

Extract it into the root directory `/`:

```
tar -zxvf cri-containerd-cni-1.6.4-linux-amd64.tar.gz -C /

etc/
etc/systemd/
etc/systemd/system/
etc/systemd/system/containerd.service
etc/crictl.yaml
etc/cni/
etc/cni/net.d/
etc/cni/net.d/10-containerd-net.conflist
usr/
usr/local/
usr/local/sbin/
usr/local/sbin/runc
usr/local/bin/
usr/local/bin/critest
usr/local/bin/containerd-shim
usr/local/bin/containerd-shim-runc-v1
usr/local/bin/ctd-decoder
usr/local/bin/containerd
usr/local/bin/containerd-shim-runc-v2
usr/local/bin/containerd-stress
usr/local/bin/ctr
usr/local/bin/crictl
......
opt/cni/
opt/cni/bin/
opt/cni/bin/bridge
......
```

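Note: in testing, the runc bundled in `cri-containerd-cni-1.6.4-linux-amd64.tar.gz` has dynamic-linking problems on CentOS 7, so download runc separately from its GitHub releases and use it to replace the runc installed above (`/usr/local/sbin/runc`, per the archive listing):

```
wget https://github.com/opencontainers/runc/releases/download/v1.1.2/runc.amd64
install -m 755 runc.amd64 /usr/local/sbin/runc
```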

Next, generate the containerd configuration file:

```
mkdir -p /etc/containerd
containerd config default > /etc/containerd/config.toml
```

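According to the Container runtimes documentation, on Linux distributions that use systemd as the init system, using systemd as the container cgroup driver keeps nodes more stable under resource pressure. Therefore, configure containerd on every node to use the systemd cgroup driver.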

Edit the generated configuration file `/etc/containerd/config.toml`:

```
[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
  ...
  [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
    SystemdCgroup = true
```

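Then change `sandbox_image` in `/etc/containerd/config.toml` to the pause image on the Alibaba Cloud mirror (pause 3.7 is also the version kubeadm 1.24 pulls, as the image list in section 2.2 shows):

```
[plugins."io.containerd.grpc.v1.cri"]
  ...
  # sandbox_image = "k8s.gcr.io/pause:3.6"
  sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.7"
```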
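Both edits can also be made non-interactively with sed. A minimal sketch, assuming GNU sed and a file generated by `containerd config default` for containerd 1.6, which contains a `SystemdCgroup = false` line and a single `sandbox_image = ...` line; `patch_containerd_config` is a hypothetical helper name:

```bash
# Hypothetical helper: apply the SystemdCgroup and sandbox_image edits to config.toml.
# Usage: patch_containerd_config [config.toml] [pause-image]
patch_containerd_config() {
  local cfg="${1:-/etc/containerd/config.toml}"
  local pause="${2:-registry.aliyuncs.com/google_containers/pause:3.7}"
  # Switch the runc runtime to the systemd cgroup driver
  sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' "$cfg"
  # Point the sandbox (pause) image at the mirror, keeping the original indentation
  sed -i "s#^\([[:space:]]*\)sandbox_image = .*#\1sandbox_image = \"$pause\"#" "$cfg"
}
```

Run it before starting containerd, or follow it with `systemctl restart containerd` if containerd is already running.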

Enable containerd at boot and start it:

```
systemctl enable containerd --now
```

Test with crictl and make sure it prints the version information without any errors:

```
crictl version
Version:  0.1.0
RuntimeName:  containerd
RuntimeVersion:  v1.6.4
RuntimeApiVersion:  v1alpha2
```

2. Deploying Kubernetes with kubeadm

2.1 Installing kubeadm and kubelet

Install kubeadm and kubelet (plus kubectl) on every node. First add the Kubernetes yum repository from the Alibaba Cloud mirror:

```
cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
        http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
```

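Then install the packages, pinned to 1.24.0 so they match the `kubernetesVersion` used in the kubeadm configuration:

```
yum makecache fast
yum install -y kubelet-1.24.0 kubeadm-1.24.0 kubectl-1.24.0
```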

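Running `kubelet --help` shows that most of kubelet's original command-line flags are now DEPRECATED. The project recommends passing a configuration file with `--config` and setting everything those flags used to configure in that file instead; see Set Kubelet parameters via a config file. Kubernetes originally made this change to support Dynamic Kubelet Configuration, but that feature was deprecated in 1.22 and removed in 1.24. To change the kubelet configuration on all nodes in a cluster, the recommended approach is still to distribute the configuration file to each node with a tool such as Ansible.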

The kubelet configuration file must be in JSON or YAML format; see the kubelet configuration documentation for details.

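Since Kubernetes 1.8, swap must be turned off; otherwise the kubelet will not start with its default configuration. Turn swap off as follows:

```
swapoff -a
```

Then edit `/etc/fstab` to comment out the swap mount so swap stays off after a reboot, and use `free -m` to confirm that swap is off.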
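Commenting out the swap entry can also be scripted. A sketch with a hypothetical `disable_swap_in_fstab` helper; it matches entries by the filesystem-type field (the third column of fstab) and leaves lines that are already commented alone, so running it twice is safe:

```bash
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Usage: disable_swap_in_fstab [/etc/fstab]
disable_swap_in_fstab() {
  local fstab="${1:-/etc/fstab}" tmp
  tmp=$(mktemp) || return 1
  # Prefix '#' to non-comment lines whose 3rd field (filesystem type) is "swap"
  awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$fstab" > "$tmp" &&
    cat "$tmp" > "$fstab"   # write back in place, keeping the file's owner and mode
  rm -f "$tmp"
}
```

Typical use on a node: `swapoff -a && disable_swap_in_fstab && free -m`.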

Adjust swappiness by adding the following line to `/etc/sysctl.d/99-kubernetes-cri.conf`:

```
vm.swappiness=0
```

Run `sysctl -p /etc/sysctl.d/99-kubernetes-cri.conf` to apply the change.

2.2 Initializing the cluster with kubeadm init

Enable the kubelet service at boot on every node:

```
systemctl enable kubelet.service
```

`kubeadm config print init-defaults --component-configs KubeletConfiguration` prints the default configuration used for cluster initialization:

```
apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 1.2.3.4
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  name: node
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: k8s.gcr.io
kind: ClusterConfiguration
kubernetesVersion: 1.24.0
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
apiVersion: kubelet.config.k8s.io/v1beta1
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.crt
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 0s
    cacheUnauthorizedTTL: 0s
cgroupDriver: systemd
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
cpuManagerReconcilePeriod: 0s
evictionPressureTransitionPeriod: 0s
fileCheckFrequency: 0s
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 0s
imageMinimumGCAge: 0s
kind: KubeletConfiguration
logging:
  flushFrequency: 0
  options:
    json:
      infoBufferSize: "0"
  verbosity: 0
memorySwap: {}
nodeStatusReportFrequency: 0s
nodeStatusUpdateFrequency: 0s
rotateCertificates: true
runtimeRequestTimeout: 0s
shutdownGracePeriod: 0s
shutdownGracePeriodCriticalPods: 0s
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 0s
syncFrequency: 0s
volumeStatsAggPeriod: 0s
```

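The defaults show that `imageRepository` controls the registry from which the images Kubernetes needs are pulled during initialization. Based on these defaults, create the configuration file `kubeadm.yaml` used for this initialization: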

```
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.96.151
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///run/containerd/containerd.sock
  taints:
  - effect: PreferNoSchedule
    key: node-role.kubernetes.io/master
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.24.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
failSwapOn: false
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
```

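Here `imageRepository` is set to the Alibaba Cloud registry, so images can still be pulled even though gcr.io is blocked. `criSocket` selects containerd as the container runtime.

The kubelet `cgroupDriver` is also set to systemd, and the kube-proxy mode to ipvs. `failSwapOn: false` lets the kubelet start even while swap is still on (the `[WARNING Swap]` line in the init output below shows that swap was enabled on node1 in this run).

Note that kubeadm 1.24 treats `kubeadm.k8s.io/v1beta2` as deprecated and expects `criSocket` to include the `unix://` scheme; the two W0526 warnings at the top of the init output below are what kubeadm prints when either is violated. `kubeadm config migrate` can convert an older configuration file.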

Before initializing the cluster, you can pre-pull the container images Kubernetes needs on each node with `kubeadm config images pull --config kubeadm.yaml`:

```
kubeadm config images pull --config kubeadm.yaml
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.24.0
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.24.0
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.24.0
[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.24.0
[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.7
[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.5.3-0
[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.8.6
```

Next, initialize the cluster with kubeadm, using node1 as the master node. Run the following on node1:

```
kubeadm init --config kubeadm.yaml
W0526 10:22:26.657615 24076 common.go:83] your configuration file uses a deprecated API spec: "kubeadm.k8s.io/v1beta2". Please use 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.
W0526 10:22:26.660300 24076 initconfiguration.go:120] Usage of CRI endpoints without URL scheme is deprecated and can cause kubelet errors in the future. Automatically prepending scheme "unix" to the "criSocket" with value "/run/containerd/containerd.sock". Please update your configuration!
[init] Using Kubernetes version: v1.24.0
[preflight] Running pre-flight checks
        [WARNING Swap]: swap is enabled; production deployments should disable swap unless testing the NodeSwap feature gate of the kubelet
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local node1] and IPs [10.96.0.1 192.168.96.151]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [localhost node1] and IPs [192.168.96.151 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [localhost node1] and IPs [192.168.96.151 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
[control-plane] Creating static Pod manifest for "kube-scheduler"
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 17.506804 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node node1 as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]
[mark-control-plane] Marking the node node1 as control-plane by adding the taints [node-role.kubernetes.io/master:PreferNoSchedule]
[bootstrap-token] Using token: uufqmm.bvtfj4drwfvvbcev
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.96.151:6443 --token uufqmm.bvtfj4drwfvvbcev \
        --discovery-token-ca-cert-hash sha256:5814415567d93f6d2d41fe4719be8221f45c29c482b5059aec2e27a832ac36e6
```

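The complete initialization output is recorded above. It shows, roughly, the key steps needed to set up a Kubernetes cluster by hand. The key parts are:

- `[certs]`: generates the various certificates.
- `[kubeconfig]`: generates the kubeconfig files.
- `[kubelet-start]`: generates the kubelet configuration file `/var/lib/kubelet/config.yaml`.
- `[control-plane]`: creates static Pods for the apiserver, controller-manager and scheduler from the YAML files in `/etc/kubernetes/manifests`.
- `[bootstrap-token]`: generates a token. Record it; `kubeadm join` needs it later to add nodes to the cluster.
- The following commands configure kubectl access to the cluster for a regular user:

  ```
  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config
  ```

- Finally, the output gives the command for joining nodes to the cluster:

  ```
  kubeadm join 192.168.96.151:6443 --token uufqmm.bvtfj4drwfvvbcev \
      --discovery-token-ca-cert-hash sha256:5814415567d93f6d2d41fe4719be8221f45c29c482b5059aec2e27a832ac36e6
  ```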

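Check the cluster status and confirm that every component is Healthy. All three are; the only warning is that the ComponentStatus API itself has been deprecated since v1.19:

```
kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS    MESSAGE                         ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true","reason":""}
```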

If cluster initialization runs into problems, clean up with `kubeadm reset` before retrying.

2.3 Installing the package manager Helm 3

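Helm is the package manager for Kubernetes, and the rest of this guide uses Helm to install common Kubernetes components. First install helm on the master node node1:

```
wget https://get.helm.sh/helm-v3.9.0-linux-amd64.tar.gz
tar -zxvf helm-v3.9.0-linux-amd64.tar.gz
mv linux-amd64/helm /usr/local/bin/
```

Run `helm list` and confirm that it prints no errors.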

2.4 Deploying the Pod network component Calico

Calico is used as the Pod network component; install it into the cluster with helm.

Download the tigera-operator helm chart:

```
wget https://github.com/projectcalico/calico/releases/download/v3.23.1/tigera-operator-v3.23.1.tgz
```

View the customizable values of this chart:

```
helm show values tigera-operator-v3.23.1.tgz

imagePullSecrets: {}

installation:
  enabled: true
  kubernetesProvider: ""

apiServer:
  enabled: true

certs:
  node:
    key:
    cert:
    commonName:
  typha:
    key:
    cert:
    commonName:
    caBundle:

resources: {}

# Configuration for the tigera operator
tigeraOperator:
  image: tigera/operator
  version: v1.27.1
  registry: quay.io
calicoctl:
  image: docker.io/calico/ctl
  tag: v3.23.1
```

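The customized values.yaml:

```
# The values above can be customized, e.g. to pull the calico images from a private registry.
# This is only a personal local environment for testing the new k8s version, so values.yaml is left empty.
```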

Install calico with helm:

```
helm install calico tigera-operator-v3.23.1.tgz -n kube-system --create-namespace -f values.yaml
```

Wait until all the pods are in the Running state:

```
kubectl get pod -n kube-system | grep tigera-operator
tigera-operator-5fb55776df-wxbph   1/1     Running   0          5m10s

kubectl get pods -n calico-system
NAME                                       READY   STATUS    RESTARTS   AGE
calico-kube-controllers-68884f975d-5d7p9   1/1     Running   0          5m24s
calico-node-twbdh                          1/1     Running   0          5m24s
calico-typha-7b4bdd99c5-ssdn2              1/1     Running   0          5m24s
```

Check the API resources that Calico added to Kubernetes:

```
kubectl api-resources | grep calico
bgpconfigurations crd.projectcalico.org/v1 false BGPConfiguration
bgppeers crd.projectcalico.org/v1 false BGPPeer
blockaffinities crd.projectcalico.org/v1 false BlockAffinity
caliconodestatuses crd.projectcalico.org/v1 false CalicoNodeStatus
clusterinformations crd.projectcalico.org/v1 false ClusterInformation
felixconfigurations crd.projectcalico.org/v1 false FelixConfiguration
globalnetworkpolicies crd.projectcalico.org/v1 false GlobalNetworkPolicy
globalnetworksets crd.projectcalico.org/v1 false GlobalNetworkSet
hostendpoints crd.projectcalico.org/v1 false HostEndpoint
ipamblocks crd.projectcalico.org/v1 false IPAMBlock
ipamconfigs crd.projectcalico.org/v1 false IPAMConfig
ipamhandles crd.projectcalico.org/v1 false IPAMHandle
ippools crd.projectcalico.org/v1 false IPPool
ipreservations crd.projectcalico.org/v1 false IPReservation
kubecontrollersconfigurations crd.projectcalico.org/v1 false KubeControllersConfiguration
networkpolicies crd.projectcalico.org/v1 true NetworkPolicy
networksets crd.projectcalico.org/v1 true NetworkSet
bgpconfigurations bgpconfig,bgpconfigs projectcalico.org/v3 false BGPConfiguration
bgppeers projectcalico.org/v3 false BGPPeer
caliconodestatuses caliconodestatus projectcalico.org/v3 false CalicoNodeStatus
clusterinformations clusterinfo projectcalico.org/v3 false ClusterInformation
felixconfigurations felixconfig,felixconfigs projectcalico.org/v3 false FelixConfiguration
globalnetworkpolicies gnp,cgnp,calicoglobalnetworkpolicies projectcalico.org/v3 false GlobalNetworkPolicy
globalnetworksets projectcalico.org/v3 false GlobalNetworkSet
hostendpoints hep,heps projectcalico.org/v3 false HostEndpoint
ippools projectcalico.org/v3 false IPPool
ipreservations projectcalico.org/v3 false IPReservation
kubecontrollersconfigurations projectcalico.org/v3 false KubeControllersConfiguration
networkpolicies cnp,caliconetworkpolicy,caliconetworkpolicies projectcalico.org/v3 true NetworkPolicy
networksets netsets projectcalico.org/v3 true NetworkSet
profiles projectcalico.org/v3 false Profile
```

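These API resources belong to Calico, so managing them with kubectl is not recommended; install calicoctl to manage them instead. Install calicoctl as a kubectl plugin, using the calicoctl release that matches the installed Calico version (v3.23.1 here):

```
cd /usr/local/bin
curl -L -o kubectl-calico "https://github.com/projectcalico/calico/releases/download/v3.23.1/calicoctl-linux-amd64"
chmod +x kubectl-calico
```

Verify that the plugin works:

```
kubectl calico -h
```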

2.5 Verifying that cluster DNS works

```
kubectl run curl --image=radial/busyboxplus:curl -it
If you don't see a command prompt, try pressing enter.
[ root@curl:/ ]$
```

Inside the container, run `nslookup kubernetes.default` and confirm that the name resolves:

```
nslookup kubernetes.default
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name:      kubernetes.default
Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local
```

2.6 Adding nodes to the Kubernetes cluster

Now add node2 and node3 to the Kubernetes cluster by running the following on each of them:

```
kubeadm join 192.168.96.151:6443 --token uufqmm.bvtfj4drwfvvbcev \
    --discovery-token-ca-cert-hash sha256:5814415567d93f6d2d41fe4719be8221f45c29c482b5059aec2e27a832ac36e6
```
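The bootstrap token in this command is valid for 24 hours (`ttl: 24h0m0s` in the defaults shown earlier); after that, `kubeadm token create --print-join-command` on node1 prints a fresh join command. The `--discovery-token-ca-cert-hash` value does not change and can be recomputed from the cluster CA certificate with the openssl pipeline from the kubeadm join documentation. Below it is wrapped in a hypothetical `ca_cert_hash` function; it assumes an RSA CA key, which is what kubeadm generates by default:

```bash
# Hypothetical helper: compute the value for --discovery-token-ca-cert-hash.
# It is the SHA-256 of the CA's DER-encoded public key (SubjectPublicKeyInfo).
# Usage: ca_cert_hash [/etc/kubernetes/pki/ca.crt]
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}"
  printf 'sha256:%s\n' "$(openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex | sed 's/^.* //')"
}
```

On node1, `ca_cert_hash` should print the same `sha256:...` string that `kubeadm init` printed above.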

node2 and node3 joined the cluster without any problems. On the master node, list the nodes in the cluster:

```
kubectl get node
NAME    STATUS   ROLES                  AGE   VERSION
node1   Ready    control-plane,master   29m   v1.24.0
node2   Ready    <none>                 70s   v1.24.0
node3   Ready    <none>                 58s   v1.24.0
```
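To script this check, for example while waiting for freshly joined nodes, the STATUS column can be filtered. A sketch with a hypothetical `not_ready_nodes` helper; it treats `Ready,SchedulingDisabled` (a cordoned node) as ready. The built-in `kubectl wait --for=condition=Ready node --all --timeout=300s` is an alternative that simply blocks until every node is Ready:

```bash
# Hypothetical helper: print the names of nodes whose STATUS is not Ready.
# "Ready,SchedulingDisabled" (a cordoned node) still counts as Ready.
not_ready_nodes() {
  kubectl get nodes --no-headers \
    | awk '$2 != "Ready" && $2 !~ /^Ready,/ { print $1 }'
}
```

An empty result means every node is Ready.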

3. Deploying common Kubernetes components

3.1 Deploying ingress-nginx with Helm

Exposing services in the cluster to the outside is easiest with Ingress, so next deploy ingress-nginx to Kubernetes with Helm. The Nginx Ingress Controller is deployed on an edge node of the Kubernetes cluster.

Here node1 (192.168.96.151) serves as the edge node; label it:

```
kubectl label node node1 node-role.kubernetes.io/edge=
```

Download the ingress-nginx helm chart:

```
wget https://github.com/kubernetes/ingress-nginx/releases/download/helm-chart-4.1.2/ingress-nginx-4.1.2.tgz
```

View the customizable values of the `ingress-nginx-4.1.2.tgz` chart:

```
helm show values ingress-nginx-4.1.2.tgz
```

Customize values.yaml as follows:

```
controller:
  ingressClassResource:
    name: nginx
    enabled: true
    default: true
    controllerValue: "k8s.io/ingress-nginx"
  admissionWebhooks:
    enabled: false
  replicaCount: 1
  image:
    # registry: k8s.gcr.io
    # image: ingress-nginx/controller
    # tag: "v1.1.0"
    registry: docker.io
    image: unreachableg/k8s.gcr.io_ingress-nginx_controller
    tag: "v1.2.0"
    digest: sha256:314435f9465a7b2973e3aa4f2edad7465cc7bcdc8304be5d146d70e4da136e51
  hostNetwork: true
  nodeSelector:
    node-role.kubernetes.io/edge: ''
  affinity:
    podAntiAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
      - labelSelector:
          matchExpressions:
          - key: app
            operator: In
            values:
            - nginx-ingress
          - key: component
            operator: In
            values:
            - controller
        topologyKey: kubernetes.io/hostname
  tolerations:
  - key: node-role.kubernetes.io/master
    operator: Exists
    effect: NoSchedule
  - key: node-role.kubernetes.io/master
    operator: Exists
    effect: PreferNoSchedule
```

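The nginx ingress controller runs with `replicaCount: 1` and is scheduled onto the edge node node1: the nodeSelector matches the edge label, and the tolerations allow it onto the master's taints. Instead of setting `externalIPs` on the nginx ingress controller Service, `hostNetwork: true` makes the controller use the host's network.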

Because k8s.gcr.io is blocked, the image is replaced with `unreachableg/k8s.gcr.io_ingress-nginx_controller`; pull it in advance:

```
crictl pull unreachableg/k8s.gcr.io_ingress-nginx_controller:v1.2.0
```

Install the chart:

```
helm install ingress-nginx ingress-nginx-4.1.2.tgz --create-namespace -n ingress-nginx -f values.yaml
```

Check that the controller Pod is running:

```
kubectl get pod -n ingress-nginx
NAME                                        READY   STATUS    RESTARTS   AGE
ingress-nginx-controller-7f574989bc-xwbf4   1/1     Running   0          117s
```

Visit http://192.168.96.151: if it returns nginx's default 404 page, the deployment is complete.

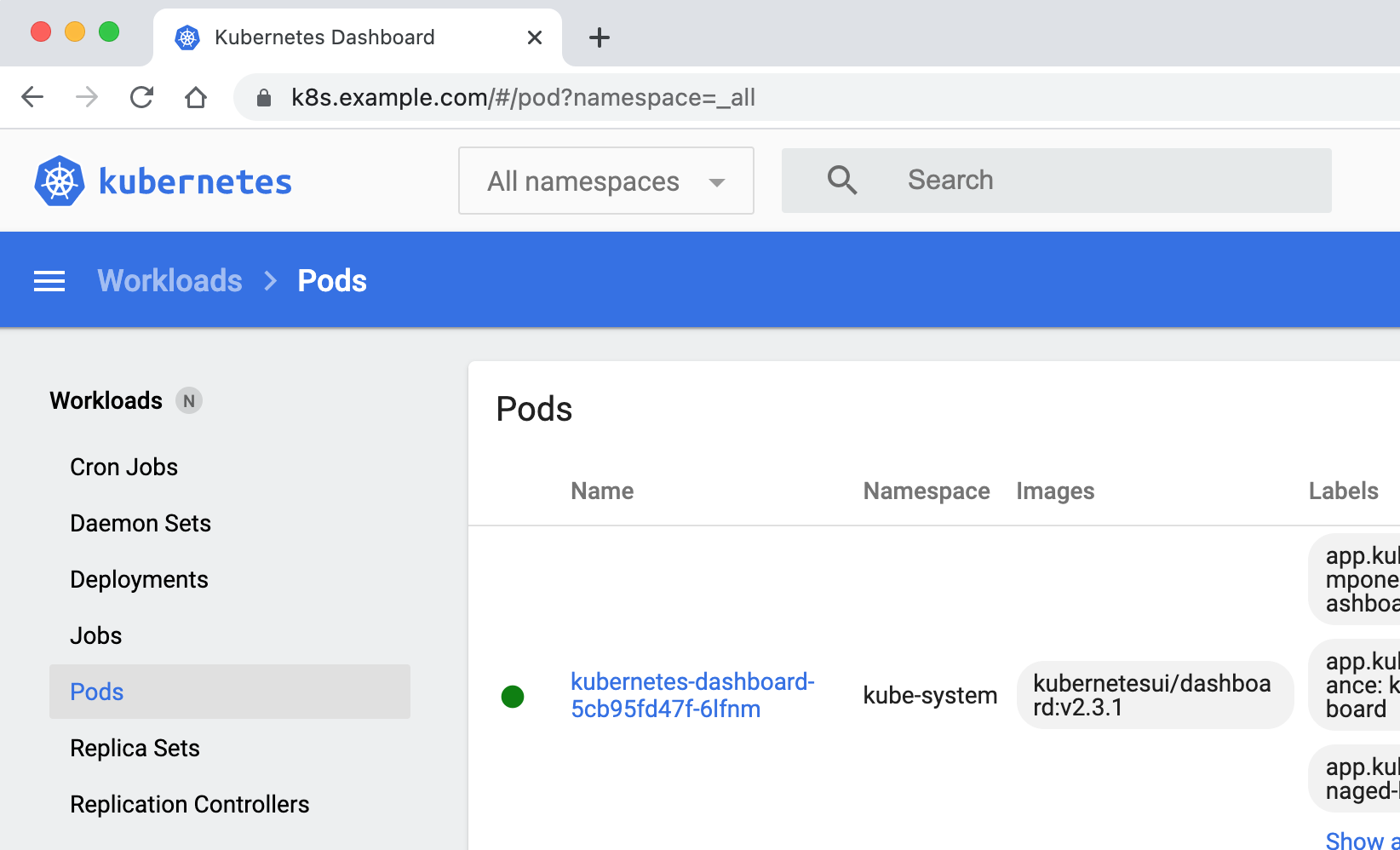

3.2 Deploying the dashboard with Helm

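Deploy metrics-server first:

```
wget https://github.com/kubernetes-sigs/metrics-server/releases/download/metrics-server-helm-chart-3.8.2/components.yaml
```

In components.yaml, change the image to `docker.io/unreachableg/k8s.gcr.io_metrics-server_metrics-server:v0.5.2`.

Also add `--kubelet-insecure-tls` to the container's startup arguments (the kubelet serving certificates generated by kubeadm are self-signed, so metrics-server cannot verify them otherwise).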
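Both edits can be applied with sed instead of by hand. A minimal sketch, assuming GNU sed and that the metrics-server container in components.yaml has a single `image:` line mentioning metrics-server and a `- --cert-dir=...` argument to insert after; `patch_metrics_server` is a hypothetical helper name:

```bash
# Hypothetical helper: swap the metrics-server image and add --kubelet-insecure-tls.
# Usage: patch_metrics_server [components.yaml] [image]
patch_metrics_server() {
  local f="${1:-components.yaml}"
  local img="${2:-docker.io/unreachableg/k8s.gcr.io_metrics-server_metrics-server:v0.5.2}"
  # Replace the container image, keeping the original indentation
  sed -i "s#^\([[:space:]]*\)image: .*metrics-server.*#\1image: $img#" "$f"
  # Add the flag right after the --cert-dir argument, only if it is not already present
  grep -q -- '--kubelet-insecure-tls' "$f" ||
    sed -i 's#^\([[:space:]]*\)- --cert-dir=.*$#&\n\1- --kubelet-insecure-tls#' "$f"
}
```

Running it twice leaves the file unchanged the second time; review the result with `grep -n -e image: -e kubelet-insecure-tls components.yaml` before applying it.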

```
kubectl apply -f components.yaml
```

Once the metrics-server pod has started, wait a while and `kubectl top` will show metrics for the cluster's nodes and pods:

```
kubectl top node
NAME    CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
node1   509m         12%    3654Mi          47%
node2   133m         3%     1786Mi          23%
node3   117m         2%     1810Mi          23%

kubectl top pod -n kube-system
NAME                               CPU(cores)   MEMORY(bytes)
coredns-74586cf9b6-575nl           6m           16Mi
coredns-74586cf9b6-mbn8s           5m           17Mi
etcd-node1                         49m          91Mi
kube-apiserver-node1               142m         490Mi
kube-controller-manager-node1      38m          54Mi
kube-proxy-k5lzs                   26m          19Mi
kube-proxy-rb5pf                   9m           15Mi
kube-proxy-w5zpk                   27m          16Mi
kube-scheduler-node1               7m           18Mi
metrics-server-8dfd488f5-r8pbh     8m           21Mi
tigera-operator-5fb55776df-wxbph   10m          38Mi
```

Next, deploy the Kubernetes dashboard with helm. Add the chart repo:

```
helm repo add kubernetes-dashboard https://kubernetes.github.io/dashboard/
helm repo update
```

View the chart's customizable values:

```
helm show values kubernetes-dashboard/kubernetes-dashboard
```

Customize values.yaml as follows:

```
image:
  repository: kubernetesui/dashboard
  tag: v2.5.1
ingress:
  enabled: true
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
  hosts:
  - k8s.example.com
  tls:
  - secretName: example-com-tls-secret
    hosts:
    - k8s.example.com
metricsScraper:
  enabled: true
```

First create the secret that holds the SSL certificate for k8s.example.com:

```
kubectl create secret tls example-com-tls-secret \
  --cert=cert.pem \
  --key=key.pem \
  -n kube-system
```
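For a lab setup without a real certificate for k8s.example.com, a self-signed pair can stand in for `cert.pem` and `key.pem`. A sketch with a hypothetical `make_self_signed_cert` helper; note that `-addext` needs OpenSSL 1.1.1 or newer, which the OpenSSL 1.0.2 shipped with CentOS 7 lacks, so run it on a machine with a newer openssl. Browsers will warn about the certificate because nothing trusted signed it:

```bash
# Hypothetical helper: create a self-signed cert.pem/key.pem pair for one host name.
# Usage: make_self_signed_cert k8s.example.com [output-dir]
make_self_signed_cert() {
  local host="$1" dir="${2:-.}"
  # RSA 2048 key without a passphrase, valid for 365 days, with the host as CN and SAN
  openssl req -x509 -newkey rsa:2048 -nodes -days 365 \
    -keyout "$dir/key.pem" -out "$dir/cert.pem" \
    -subj "/CN=$host" -addext "subjectAltName=DNS:$host"
}
```

Running `make_self_signed_cert k8s.example.com` in the current directory produces the two files the `kubectl create secret tls` command expects.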

Deploy the dashboard with helm:

```
helm install kubernetes-dashboard kubernetes-dashboard/kubernetes-dashboard \
  -n kube-system \
  -f values.yaml
```

Confirm that the command above deployed the dashboard successfully.

Create an administrator service account:

```
kubectl create serviceaccount kube-dashboard-admin-sa -n kube-system

kubectl create clusterrolebinding kube-dashboard-admin-sa \
  --clusterrole=cluster-admin --serviceaccount=kube-system:kube-dashboard-admin-sa
```

Create the token the cluster administrator uses to log in to the dashboard. Kubernetes 1.24 no longer auto-generates a Secret-based token for each service account, so the token is requested with `kubectl create token` (new in kubectl 1.24); `--duration=87600h` asks for a 10-year lifetime:

```
kubectl create token kube-dashboard-admin-sa -n kube-system --duration=87600h

eyJhbGciOiJSUzI1NiIsImtpZCI6IlU1SlpSTS1YekNuVzE0T1k5TUdTOFFqN25URWxKckt6OUJBT0xzblBsTncifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxOTY4OTA4MjgyLCJpYXQiOjE2NTM1NDgyODIsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJrdWJlLWRhc2hib2FyZC1hZG1pbi1zYSIsInVpZCI6IjY0MmMwMmExLWY1YzktNDFjNy04Mjc5LWQ1ZmI3MGRjYTQ3ZSJ9fSwibmJmIjoxNjUzNTQ4MjgyLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06a3ViZS1kYXNoYm9hcmQtYWRtaW4tc2EifQ.Xqxlo2vJ9Hb6UUVIqwvc8I5bahdxKzSRSaQI_67Yt7_YEHmkkHApxUGlwJYTKF9ufww3btlCmM8PtRn5_Q1yv-HAFyTOYKo8WHZ9UCm1bT3X8V8g4GQwZIl2dwmlUmKb1unBz2-em2uThQ015bMPDE8a42DV_bOwWjljVXat0nwV14nGorC8vKLjXbohrIJ3G1pgCJvlBn99F1RelmSUSQLlolUFoxpN6MamYTElwR6FfD-AGmFXvZSbcFaqVW0oxJHV70Gjs2igOtpqHFxxPlHT8aQzlRiybPtFyBf9Ll87TmVJimT89z8wv2si2Nee8bB2jhsApLn8TJyUSlbTXA
```
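A token created this way is a JWT, so its claims, such as `exp` (the expiry time in Unix seconds), can be inspected locally to confirm the requested lifetime was granted. A minimal sketch with a hypothetical `jwt_claims` helper, assuming GNU coreutils `base64`; it only decodes the payload and does not verify the signature:

```bash
# Hypothetical helper: print the (unverified) JSON claims of a JWT.
# Usage: jwt_claims "$TOKEN"
jwt_claims() {
  local payload pad
  # The payload is the second dot-separated segment, base64url-encoded
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url drops the '=' padding; restore it so base64 -d accepts the input
  pad=$(( (4 - ${#payload} % 4) % 4 ))
  while [ "$pad" -gt 0 ]; do payload="$payload="; pad=$((pad - 1)); done
  printf '%s' "$payload" | base64 -d
}
```

For the token above, the claims include `"exp":1968908282` and `"iat":1653548282`. The difference is 315360000 seconds, i.e. exactly the 87600h that was requested; `date -d @1968908282` turns the expiry into a readable date.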

Log in to the Kubernetes dashboard with the token above.